For most companies, the journey to analytics starts with a crime.

Not corporate espionage or anything that'd make headlines — just the quiet abuse of your transactional database to run nightly ETL jobs that sound innocent until you realize you're hammering the same tables your app depends on to stay alive.

The Crime Story

Imagine we're building a simple web application. It could be a CRM, ERP, a banking backend, billing system, or even POS terminals — pick your project.

At first, it's clean. Compact. A tidy little monolith backed by a relational database — PostgreSQL (maybe MySQL if you're feeling reckless). It does what it's supposed to — handles inserts, updates and deletes. Users log in, click around, and the system responds. Nothing fancy.

But sooner or later, someone outside the engineering team starts asking questions. Questions like, "How many active users do we have?" or "What's our average time to close a ticket?" or the classic: "Why doesn't the report match what I see in the app?"

And just like that, the analyst shows up.

Suddenly, your transactional database isn't just for transactions. It's now the source of truth for everything. Everyone wants a piece — BI tools, ML pipelines, reporting systems, etc. Business wants data insights (whatever that means today). Ops wants real-time dashboards. Management wants today's metrics yesterday.

Data eventually becomes mission-critical, so the company starts running regular reports. Decisions have to come from somewhere, and that somewhere is your already-struggling production database.

The thing is, these reports? They're heavy — they run complex queries. They pull wide joins. They scan from gigabytes to petabytes of junk data. These queries run nightly — or worse, hourly — and they drag your transactional system down with them.

They don't just slow things down. They burn CPU, eat memory, and chew through disk I/O. And that's before we even get into the network traffic from exporting all that data. Meanwhile, your business users are flying blind because the data they're waiting for only refreshes once a day, which means you're always making decisions based on what happened yesterday, not today.

Sure, if you've got a quiet window — say 2 AM to 5 AM — and your nightly dump fits in that slot, you might get away with it. But what if your app has no off-hours? What if your data grows, and your window shrinks?

What happens when your extract job starts overlapping with business hours?

That's when the wheels come off.

Change Data Capture

Change Data Capture (CDC) is exactly what it sounds like: capturing only the changes. Not full tables. Not yesterday's copy of everything. Just the deltas — inserts, updates, deletes — the meaningful stuff.

Here's the idea: CDC watches your source system, sees what changed, and replicates just those changes to a target — usually a data warehouse or some other analytics store. From there, you do your reporting, analysis, forecasting, machine learning, late-night executive dashboards — you name it, all without touching the operational database.

So your users keep working, your application keeps responding, and your analysts get fresh numbers without breaking anything. Everybody wins.

At its core, CDC provides a historical stream of changes to your tables. It doesn't just say, "Hey, something changed". It says what changed — which row, which field, old value, new value. All of it. In a format that downstream systems can ingest and replay. That's your delta.

Now you might ask, does this really solve our earlier problems?

The answer? Absolutely.

First, because you're not hammering the source databasre with huge queries, your load always stays consistent. No more scary spikes from batch jobs kicking off at 2 AM that end up blocking half your transactions.

Second, the data isn't being sent in giant blobs. It's flowing in constantly, in small, digestible chunks. That means fewer network headaches, fewer retries, less infrastructure bloat. You don't need to architect a mega-pipeline just to ship a few updates.

And finally — maybe most importantly — the data in your warehouse is fresh. Not "updated every 24 hours" fresh. Actually fresh. Like, real-time dashboard fresh. That's a huge win for decision-making. You're no longer looking in the rearview mirror, you're getting data as things happen.

That's the power of CDC.

Extracting the Delta

Let's talk about delta extraction. It's one of those behind-the-scenes tasks that powers everything from analytical data warehouses to CRMs, MDM hubs to disaster recovery setups, and even migration projects. If you're moving data between systems and you don't want to move everything, delta is your friend.

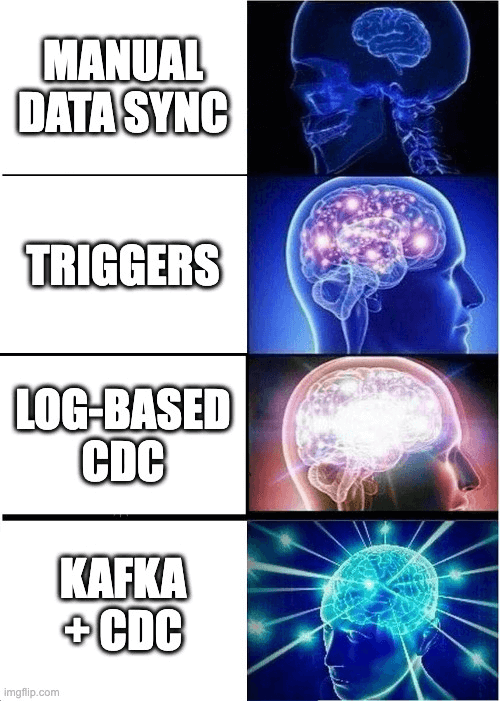

In the beginning, we solved this with timestamps. You add an updated_at column on the table, and now you have a crude way to find what's changed. "Just give me the rows where updated_at > last_exported_at". Simple, right?

Well... not quite.

Because to find those rows, you still have to scan the whole table. And if your table changes frequently (which is often the case), that scan starts getting expensive. It's slow. It hits the disk hard. And worst of all, it's brittle — if a row gets updated twice before your next export, you might miss it. Congrats, you've got data loss.

| id | first_name | last_name | timestamp | |

|---|---|---|---|---|

| 1 | John | Doe | example@gmail.com | 2022-02-04 16:11:34.944447 |

Then someone gets clever: "What if we version the rows?" Now you're storing every version of every record, forever. It works — sort of. But at what cost? You're bloating your tables. You're writing custom logic to track history.

Also, let's not forget: this usually requires changing the application itself. And sometimes, that's just not an option. Either you don't own the app's code, or you don't want to risk touching the 10-year-old COBOL code that's holding your company together.

| id | first_name | last_name | version | |

|---|---|---|---|---|

| 1 | John | Doe | example@gmail.com | 2 |

Eventually, we arrive at triggers.

A trigger is a special type of stored procedure in the database that fires when something changes in a table. You insert a row? The trigger runs. You update a row? The trigger runs. Delete? Trigger.

So you write a trigger that watches your table and copies every change into a separate, "safe to read" shadow table. It's delta extraction with minimal app changes.

It sounds nice in theory, and it can work. But here's the catch: now every write to your table is doing double duty. First the original insert/update/delete, and then whatever your trigger is doing. That adds latency, which increases the I/O. As a result your writes are slower, your CPU is busier, and maintaining those triggers turns into its own kind of hell.

Still, some CDC tools out there do use triggers, though mostly legacy ones. Sometimes, it's the only viable option.

But thankfully, there's a better way now.

The Modern Way

Let's zoom out for a second. Your database is transactional, right? That means it already tracks every single change in a transaction log — also called a WAL, redo log, journal, whatever your DB vendor decided to name it.

This log is a complete, ordered, append-only stream of all changes ever made. It exists so the database can roll back transactions, recover from crashes, and maintain consistency.

The best part is that it's already there: you're paying for it whether you use it or not.

So why not just read the log?

That's the modern approach to CDC. Instead of using timestamps or triggers, we just read the transaction log directly. No extra writes. No schema changes. No application-level modifications.

The log becomes your source of truth for delta changes.

A CDC engine reads that log, picks out the changes you're interested in, and pipes them to a change table or directly to a target system like a data warehouse or data stream processor. All the inserts, updates, deletes — captured exactly as they happened, in order.

But for this to work, you have to parse that log correctly. Track transactions. Handle rollbacks. Know how to filter out internal database noise (things like index rebuilds, rewriting table due to CLUSTER/OPTIMIZE, system schemas updates, etc). In the early days, vendors would run an agent on the database host to read the log and dump the changes to disk or push them downstream.

Today, the game's changed. Modern CDC tools run off-box. They tap into the log remotely, stream it in-memory, process deltas on the fly, and send them wherever you need — Kafka, S3, Redshift, you name it.

This setup doesn't load your database. It doesn't interfere with normal operations. And it scales beautifully. It's efficient. It's real-time. And most importantly, it just works.

Building a Production-Ready CDC System

Okay, so you've decided to use CDC. You're sold on the concept. But before you pat yourself on the back and start wiring up pipelines, let's talk about what it really takes to make CDC production-grade.

Capturing deltas is the easy part. Making sure they land in the right place, in the right order, at the right time? That's where things get interesting.

First, ordering matters. If updates land out of order, your downstream system can end up in a totally inconsistent state. Imagine applying a delete before the insert. Or overwriting a change with an older value.

Second, you need delivery guarantees. At-least-once is the bare minimum. Ideally, you want exactly-once delivery, or something close to it. Because if even a single event goes missing — say a delete gets dropped or a critical update never lands — your whole target system can go out of sync. And good luck figuring that out three weeks later when a dashboard starts showing negative revenue.

Finally, flexible message formatting is a must. Not all downstream systems speak the same language. Some want Avro. Some want JSON. Some want flattened payloads, some want the full before/after row state. If your CDC pipeline can't translate between formats cleanly, you're going to spend a lot of time writing glue code.

So what architecture handles all of this gracefully?

Pub/sub with a message bus.

Every time a change happens in your source system, that change gets pushed to a message bus— Kafka, Pulsar, whatever you're into. Then, downstream systems can subscribe to those events and consume them however and whenever they want.

Why does this work so well?

Because it scales. The producer — your source system — just firehoses changes into the bus. And if one consumer can't keep up? Spin up more consumers. Need to fan out to multiple targets? No problem. Each one can subscribe independently and process the stream at its own pace.

More importantly, it decouples your systems. The source can change its underlying database, or move a dataset to another host, or even swap engines entirely. As long as it keeps pushing the same events to the bus, none of the downstream systems need to care. They're happily consuming events, blissfully unaware of whatever chaos is happening upstream.

This is what makes pub/sub CDC architectures production-grade.

They're fast. They're resilient. And they give you breathing room as your systems evolve. Because the source system will inevitably change. The schema will shift. The data format will mutate. If you didn't build your CDC system to handle that from day one?

Well, I hope you like replatforming under pressure.

Wrapping it up

Look, Change Data Capture isn't magic. It's not going to fix your broken data models, clean up your event schema, or rewrite your 3000-line Airflow DAG. It won't stop sales from asking for 14 versions of the same dashboard, each with a slightly different definition of "monthly revenue."

But what it does give you is breathing room. A reliable, scalable, low-impact mechanism to get fresh, accurate data out of your operational systems and into the places that matter.