Normalization is like designing a logical world; denormalization is making it livable.

— Some Wise Data Engineer

Ever wonder if your database design is holding you back? You've normalized every table, and your queries now crawl at a snail's pace. Maybe it's time to shake things up with denormalization?

Welcome to the other side of database design — denormalization.

What is Denormalization?

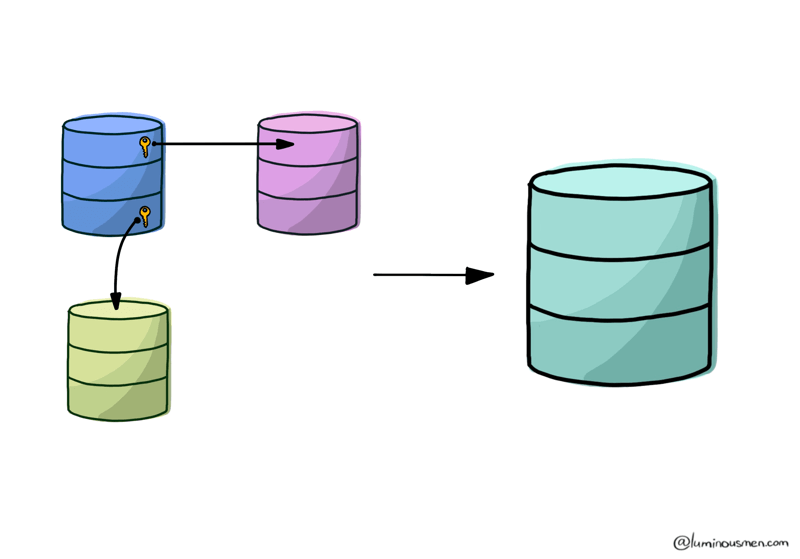

Denormalization isn't just breaking the rules for fun — it's a deliberate optimization strategy. While normalization aims to organize data into tidy, non-redundant schemas, denormalization trades a bit of that order for one critical goal: speed.

Speed can become a problem in highly normalized databases and it all because of joins.

Joins are the glue that holds normalized tables together, but they're not free. Every join operation requires the database to:

- Scan and fetch rows: The database retrieves matching data from multiple tables, often hopping across different parts of the disk. This disk I/O overhead slows queries down, especially if join keys aren't indexed.

- Match and combine rows: The engine compares data row by row, which can become resource-intensive with large datasets.

- Handle intermediate results: When the results of joins don't fit in memory, the database writes them to disk or recomputes them, adding further latency.

- Coordinate across nodes: In distributed databases, joins can involve shuffling rows across networked nodes, adding even more complexity and delays.

All of this works beautifully for small datasets or occasional queries, but when you're running millions of joins daily — say, in an analytics dashboard or reporting tool — performance bottlenecks quickly pile up.

The Example

Imagine a normalized database for an e-commerce application where products, orders, and customers are split across multiple tables. Each query to generate an order summary might require complex joins:

- Fetch the customer from

Customers. - Join it with

Orders. - Then, pull in products from

Products.

It's clean, but it's also slow when you're doing it millions of times a day. Denormalization simplifies this by, say, creating a pre-joined table (say, a OrderSummaries table) that contains all the relevant data upfront.

In a normalized database, your schema might look like this:

Orders Table

| OrderID | CustomerID | OrderDate |

|---|---|---|

| 1 | 101 | 2023-11-01 |

| 2 | 102 | 2023-11-02 |

Customers Table

| CustomerID | Name | |

|---|---|---|

| 101 | John Doe | john@example.com |

| 102 | Jane Smith | jane@example.com |

OrderDetails Table

| OrderID | ProductID | Quantity |

|---|---|---|

| 1 | 201 | 2 |

| 2 | 202 | 1 |

To fetch a single order with customer details and products, you'd need at least three joins. Now imagine running that query for every order in your warehouse reporting dashboard.

Instead, a denormalized schema might look like this:

OrderSummaries Table

| OrderID | CustomerName | CustomerEmail | ProductName | Quantity | OrderDate |

|---|---|---|---|---|---|

| 1 | John Doe | john@example.com | Widget A | 2 | 2023-11-01 |

| 2 | Jane Smith | jane@example.com | Widget B | 1 | 2023-11-02 |

Sure, there's redundancy here — John Doe's email is stored multiple times if he orders more than once. But querying this table is lightning-fast compared to normalizing everything.

By cutting down on joins, denormalization optimizes read-heavy workloads like reporting dashboards or user-facing analytics. Of course, this trade-off introduces redundancy and potential consistency challenges, but in the right use case, the performance gains are worth it.

When to Use Denormalization?

Denormalization, like any design decision, has its trade-offs. It increases data redundancy, which can boost performance but also requires more effort to maintain data consistency and increases storage cost. Enforcing referential integrity becomes tricky as related data is scattered across different tables.

So, what are the situations where denormalization comes to the rescue? Let's check out some common scenarios.

1. Too Many Table Connections

In highly normalized databases, you might find yourself joining a dozen or more tables with a single query. Each join is a costly operation, consuming resources and stretching execution time. Denormalization can be your savior here in a couple of ways.

Here's how:

- Combine related static tables. Merge tables containing reference data that change infrequently and are tightly coupled. For example, if you're constantly joining five or six small tables in your queries, combining them into one denormalized table could drastically reduce query time.

- Add redundant fields. Include commonly joined fields directly in a table. While this introduces redundancy, it can significantly boost performance.

2. Complex Calculations

Queries that involve complex calculations, especially aggregations or multi-step transformations, can be slow and resource-intensive. Sometimes, it's a good idea to add one or two extra columns to your table that store frequently used (and difficult to calculate) values.

Imagine you need to calculate the total cost of each order, which involves multiplying the quantity of each product by its unit price and then subtracting any discounts. Doing this calculation on the fly can take a lot of time, especially with a large number of orders. But if you precalculate this value and store it in a separate column, your queries may run much faster. Although it does require keeping the data in that column up-to-date.

3. Pre-Aggregating Frequently Used Data

In reporting-heavy systems, users often run repetitive queries to calculate metrics like totals, averages, or counts. Doing this computation on the fly every time can lead to bottlenecks, especially with growing datasets. Instead of calculating these sums dynamically, you could denormalize by storing pre-aggregated values in a summary table.

Here's how:

- Create a denormalized table with daily, weekly, or monthly totals.

- Update the table during ETL processes or using triggers.

This approach significantly speeds up reporting queries while reducing server load.

4. Simplifying Hierarchical Relationships

If your data involves complex hierarchical relationships (e.g., organizational structures, product categories), navigating these relationships in a normalized schema can be challenging and slow.

Imagine a content management system where articles are nested under categories, subcategories, and tags. A normalized schema would require multiple joins to retrieve all articles in a nested category.

Flatten the hierarchy into a denormalized table. Include a CategoryPath field (e.g., "Home > Electronics > Phones"), or a precomputed column with all descendant relationships. This denormalization simplifies and speeds up retrieval of hierarchical data at the cost of increased redundancy.

Modern Alternatives to Traditional Denormalization

The database landscape has evolved. Modern tools and architectures offer alternatives to old-school denormalization. Here's how you can rethink your approach:

1. Materialized Views

Materialized views are precomputed query results stored as tables. Platforms like PostgreSQL, Snowflake, and Databricks support them natively, making them an excellent alternative for summary data.

For example, instead of denormalizing order data into a summary table, create a materialized view that aggregates totals by user, product, or date. These views are automatically refreshed and optimized for fast reads.

2. NoSQL Systems

NoSQL databases like MongoDB and Cassandra are designed for denormalized, document-oriented storage. They shine in scenarios where you need to store complex, hierarchical data structures with minimal joins.

3. Caching Layers

Instead of denormalizing your database, consider caching expensive query results. Tools like Redis or Memcached can store precomputed results in memory, delivering sub-millisecond query times.

Balance database design

The decision to normalize or denormalize often is more of a set of guidelines. There might be parts of your operational database that you knowingly design to be denormalized. However, that doesn't mean you don't need to spend time designing your database.

The degree of normalization that you should apply to your data depends on your use case.

When we talk about OLTP systems (Online Transaction Processing) where we deal with lot of insert, delete and updates then it's a good idea to go with normalized tables.

But, if you're dealing with OLAP systems (Online Analytical Processing) where you need to deal with semi-structured data like analyze historical data, denormalized tables are your best friend. Since you won't be updating data here, having a bit of redundancy won't hurt, we wont end up in inconsistent state.

So, the most benefitial use of denormalization is in Reporting and Analytics and Read-Heavy applications: applications where the speed of read operations is critical to user experience.

Wrapping it Up

The essence of crafting a robust database boils down to forming a set of tables that accurately reflects the subject area and aligns with the demands of both that domain and the system you're building.

If you can't predict the pros and cons of denormalization in advance, it's a smart move to start with normalized tables model. Then, when you see performance bottlenecks with specific queries, that's the moment to consider denormalization to optimize those queries.