AI Fluency Expectations

⚠️ Tech terminology is a mess these days. In this blog post, we say 'AI' while mostly talking about LLMs, because the two terms have become interchangeable lately. Don't blame me if that bothers you.

We Got Tractors

Have you ever read about the first farmers to get tractors?

At first, they didn't replace all the horses. They parked the tractor next to the barn and argued about whether it was worth the fuel.

Some folks tried it for a week, decided, "eh, my plow works just fine", and went back to doing things the slow way.

But here's the thing — you can't un-invent the tractor.

Once the neighbor's farm starts pulling twice the harvest with half the workers, you either adapt or you start renting out your land to those who do.

That's AI in software engineering right now.

It's not here to be a toy. It's not here to make your job "interesting" (or boring depending on how you use it). It's here to plow through the boring, the repetitive, the cognitive grunt work you've been wasting hours on.

And just like tractors didn't mean farmers stopped farming, AI doesn't mean engineers will stop engineering.

It means the definition of work changes.

Before tractors, "being a good farmer" meant knowing how to hitch the horses, pace the field, and repair a wooden plow (this is my assumption at least, I'm not a farmer [yet]).

After tractors came around, the baseline shifted — suddenly you had to know how to operate and maintain a machine, plan bigger fields, and think differently about the yield.

The people still shoveling by hand aren't "more authentic". They're just slower.

But just as a tractor can't run without a driver, our new paradigm-shifting tool can't run without engineers (yet).

The Baseline Shift

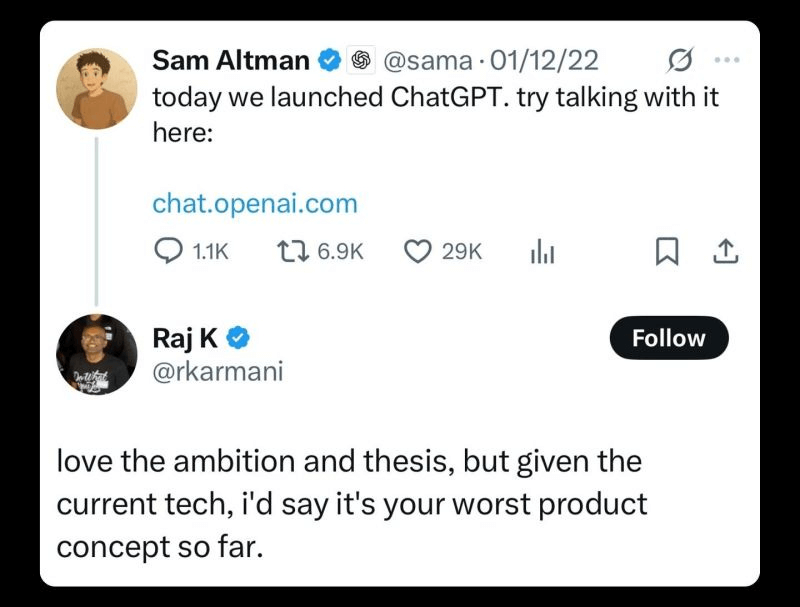

Remember how fast we went from LLMs being a "toy" to "production"? Two years. This is not emerging tech anymore — it's in our base toolbox. And I'm actually late writing about this — AI has been the norm for a while at this point.

Companies are figuring out how to apply it, in any and every way they can. They're changing processes, revising competency matrices, quietly redefining grades, and raising the baseline level of AI literacy across not just engineers, but designers, analysts, lawyers, HR, and everyone else. That leads us to a new normal — AI fluency expectations.

Typing speed never really mattered much in software engineering (no one ships code at stenographer-speed), but thinking speed was always a bottleneck. Now, the bottleneck has shifted — the scope of "machines doing grunt work" has exploded from procedural to creative tasks. And that's never been true before.

For us software engineers, what's changed is that you can now offload significant cognitive grunt work, the messy, open-ended tasks you used to do manually: exploring a new codebase, parsing 500 lines of logs, documenting a new API, writing tests for a legacy Java monolith, drafting a migration script, and so on. That means faster development and lower costs (tokens aren't free, but dev time is more expensive).

AI fluency is becoming a hiring standard. Companies are explicitly testing candidates on their ability to use GenAI tools (one, two). Hiring rubrics are now grading engineers on this, the same way we've always graded system design or debugging skills.

Now We Have to Talk

If you've been working as an engineer for over a year, you already know how to tell a computer what to do. Through code, configs, pipelines, data graphs, whatever.

Now, that's not enough. You have to explain things to a model that doesn't compile, doesn't validate, doesn't scream at you with syntax errors — but can generate both a solution and a bug at the same time.

That's both its problem and its power. It's like hiring a junior dev who writes code at the speed of thought but can't think at all. Your job isn't just to "assign a task", but to set intent, give context, define boundaries — and then check the result.
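To make "set intent, give context, define boundaries, then check the result" a bit more concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `TaskBrief` class, its field names, the prompt layout, and the sample migration task are hypothetical, not part of any real tool or library. It only shows one way to force yourself to write down all four parts before handing work to a model.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """Hypothetical container for what you hand to a model."""

    intent: str                                          # the outcome you want
    context: list[str] = field(default_factory=list)     # facts the model can't guess
    boundaries: list[str] = field(default_factory=list)  # what it must not do
    checks: list[str] = field(default_factory=list)      # how you'll verify the result

    def missing_parts(self) -> list[str]:
        """Return the names of the parts that were left empty."""
        parts = {
            "intent": self.intent.strip(),
            "context": self.context,
            "boundaries": self.boundaries,
            "checks": self.checks,
        }
        return [name for name, value in parts.items() if not value]

    def to_prompt(self) -> str:
        """Render the brief as plain text (the layout is arbitrary)."""
        sections = [
            ("Intent", [self.intent]),
            ("Context", self.context),
            ("Boundaries", self.boundaries),
            ("Checks", self.checks),
        ]
        lines: list[str] = []
        for title, items in sections:
            if not items:
                continue  # skip empty sections rather than print a bare header
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Illustrative values only; the task and constraints are made up.
brief = TaskBrief(
    intent="Draft a migration script that adds a nullable column",
    context=["PostgreSQL, schema managed by hand-written SQL files"],
    boundaries=["Do not drop or rename existing columns"],
    checks=["Script runs cleanly against a copy of the schema"],
)

if brief.missing_parts():
    print("Fill these in first:", brief.missing_parts())
else:
    print(brief.to_prompt())
```

The `checks` list is the part that is easiest to skip and the one that matters most: it is a reminder that reviewing the output is still your job, not the model's.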

To be fair, LLMs are quite good at writing code. They're also reasonably good at updating code when you identify the problem to fix. They can also do all the things that real software engineers do: read the code, write and run tests, add logging, and use a debugger.

But what they cannot do is build and maintain clear mental models. And that difference matters. It's the line between treating AI like a clever intern and treating it like a true execution layer in your stack — and you need a new kind of literacy to use it.

AI Fluency — What It Really Means

You just read the free excerpt. The full analysis continues on Substack.